作者:一木不

原文:https://zhuanlan.zhihu.com/p/2004262797710738371

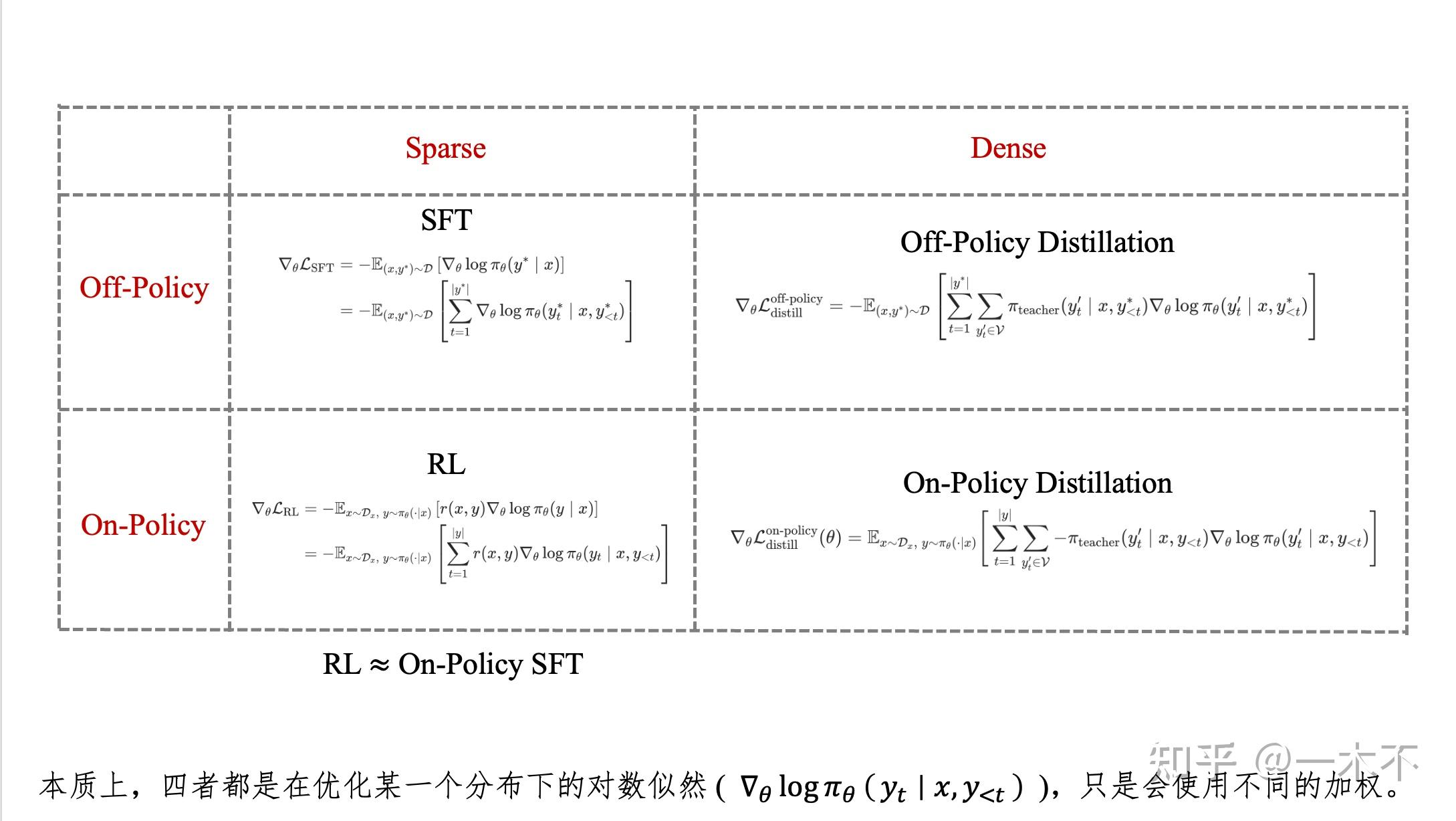

我们在此讨论SFT,Off-Policy Distillation,RL,On-Policy Distillation之间的联系和区别。

在RL没火的时候,我们提到distillation几乎都是Off-Policy Distillation。SFT和Off-Policy Distillation都是Off-Policy的,并且Off-Policy Distillation训出的模型肯定比SFT好。

RL火了之后,我们提到distillation逐渐变成了全部都是On-Policy Distillation。RL和On-Policy Distillation 都是On-Policy的,并且On-Policy Distillation比RL更有优势。

Off-Policy Distillation到On-Policy Disitllation好理解,核心区别是student策略和teacher策略在不同的数据集下进行对齐。SFT到RL怎么理解呢?我的想法是RL相当于On-Policy SFT ,可以从策略梯度上得到论证。

1. Objective回顾

1.1 SFT(Off-Policy SFT)

SFT的objective旨在最大化在Off-Policy数据集上的负对数似然:

\mathcal{L}_{\text{SFT}}(\theta) = \mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ - \log \pi_\theta(y^* \mid x) \right]

展开有:

\mathcal{L}_{\text{SFT}}(\theta) = \mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ \sum_{t=1}^{|y^*|} -\log \pi_\theta(y^*_t \mid x, y^*_{<t}) \right]

1.2 Off-Policy Distillation

Off-Policy Distillation旨在最小化 学生策略 \pi_\theta 与教师策略 \pi_{\text{teacher}} 在固定数据集 \mathcal{D} 上的KL散度:

\mathcal{L}_{\text{distill}}^{\text{off-policy}}(\theta) = \mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ \mathrm{KL}\Big( \pi_{\text{teacher}}(y^* \mid x) \,\|\, \pi_\theta(y^* \mid x) \Big) \right]

展开有:

\mathcal{L}_{\text{distill}}^{\text{off-policy}}(\theta) = \mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ \sum_{t=1}^{|y^*|} \mathrm{KL}\Big( \pi_{\text{teacher}}(\cdot \mid x, y^*_{<t}) \,\|\, \pi_\theta(\cdot \mid x, y^*_{<t}) \Big) \right]

KL散度很多种,也有很多研究,最常见的是Forward KLD。在Forward KLD下,KL散度等价为交叉熵:

\mathcal{L}_{\text{distill}}^{\text{off-policy}}(\theta) = \mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ \sum_{t=1}^{|y^*|} \sum_{y_t \in \mathcal{V}} -\pi_{\text{teacher}}(y_t \mid x, y^*_{<t}) \log \pi_\theta(y_t \mid x, y^*_{<t}) \right]

1.3 RL (本质上是On-Policy SFT)

RL的objective是最大化在一个环境下的奖励:

\mathcal{L}_{\text{RL}}(\theta) = \mathbb{E}_{x \sim \mathcal{D}_x, y \sim \pi_\theta(\cdot \mid x)} \left[ r(x,y) \right]

特别地,GRPO的reward估计采用sequence-level的组内优势:

\mathcal{L}_{\text{GRPO}}(\theta) = -\mathbb{E}_{x \sim \mathcal{D},\, y \sim \pi_\theta(\cdot \mid x)} \left[ \min \left( \rho_t(\theta) \hat{A}(x, y),\; \text{clip}\big(\rho_t(\theta), 1-\epsilon, 1+\epsilon\big) \hat{A}(x, y) \right) \right]

其中

\rho_t(\theta) = \frac{\pi_\theta(y_t \mid x, y_{<t})}{\pi_{\theta_{\text{old}}}(y_t \mid x, y_{<t})}

是重要性采样比率。

1.4 On-Policy Distillation

On-Policy Distillation旨在最小化学生策略 \pi_\theta 与教师策略 \pi_{\text{teacher}} 在学生策略自身生成的轨迹分布上 的KL散度:

\mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) = \mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ \mathrm{KL}\Big( \pi_{\text{teacher}}(y \mid x) \,\|\, \pi_\theta(y \mid x ) \Big) \right]

展开为:

\mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) = \mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ \sum_{t=1}^{|y|} \mathrm{KL}\Big( \pi_{\text{teacher}}(\cdot \mid x, y_{<t}) \,\|\, \pi_\theta(\cdot \mid x, y_{<t}) \Big) \right]

采用Forward KL,但是有策略梯度不能直接写成CE:

\mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) = \mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ \sum_{t=1}^{|y|} \sum_{y_t \in \mathcal{V}} \pi_{\text{teacher}}(y_t \mid x, y_{<t}) \log \frac{\pi_{\text{teacher}}(y_t \mid x, y_{<t})}{\pi_\theta(y_t \mid x, y_{<t})} \right]

但是On-Policy通常不考虑这一项策略梯度(认为采样过程是stop gradient的),于是:

\mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) = \mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ \sum_{t=1}^{|y|} \sum_{y_t \in \mathcal{V}} -\pi_{\text{teacher}}(y_t \mid x, y_{<t}) \log {\pi_\theta(y_t \mid x, y_{<t})} \right]

2. 梯度对比

直观上上面四个目标联系少,我们从梯度的角度考虑四个的关系

2.1 SFT

Objective:

\mathcal{L}_{\text{SFT}}(\theta) = \mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ - \log \pi_\theta(y^* \mid x) \right]

梯度推导:

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{SFT}} &= -\mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ \nabla_\theta \log \pi_\theta(y^* \mid x) \right] \\ &= -\mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ \sum_{t=1}^{|y^*|} \nabla_\theta \log \pi_\theta(y^*_t \mid x, y^*_{<t}) \right] \\ \end{aligned}

2.2 Off-Policy Distillation

Objective:

\mathcal{L}_{\text{distill}}^{\text{off-policy}}(\theta) = \mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ \sum_{t=1}^{|y^*|} \sum_{y_t \in \mathcal{V}} -\pi_{\text{teacher}}(y_t \mid x, y^*_{<t}) \log \pi_\theta(y_t \mid x, y^*_{<t}) \right]

梯度推导:

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{distill}}^{\text{off-policy}} &= -\mathbb{E}_{(x, y^*) \sim \mathcal{D}} \left[ \sum_{t=1}^{|y^*|} \sum_{y_t' \in \mathcal{V}} \pi_{\text{teacher}}(y_t' \mid x, y^*_{<t}) \nabla_\theta \log \pi_\theta(y_t' \mid x, y^*_{<t}) \right] \\ \end{aligned}

2.3 RL

Objective:

\mathcal{L}_{\text{RL}}(\theta) = -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ r(x, y) \right]

梯度推导(策略梯度定理,分布中有\theta ):

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{RL}} &= -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ r(x, y) \nabla_\theta \log \pi_\theta(y \mid x) \right] \\ &= -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ \sum_{t=1}^{|y|} r(x, y) \nabla_\theta \log \pi_\theta(y_t \mid x, y_{<t}) \right] \end{aligned}

GRPO:

\nabla_\theta \mathcal{L}_{\text{RL}} = -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ \sum_{t=1}^{|y|} \hat{A}(x, y_{\leq t}) \nabla_\theta \log \pi_\theta(y_t \mid x, y_{<t}) \right]

其中 \hat{A}(x, y_{\leq t}) 是t时刻的(组内)优势估计。

2.4 On-Policy Distillation

Objective:

\mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) = \mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ \sum_{t=1}^{|y|} \sum_{y_t \in \mathcal{V}} -\pi_{\text{teacher}}(y_t \mid x, y_{<t}) \log \pi_\theta(y_t \mid x, y_{<t}) \right]

梯度推导(策略梯度定理,分布中有\theta ):

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) &= \mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \Bigg[ \left( \sum_{t=1}^{|y|} \nabla_\theta \log \pi_\theta(y_t \mid x, y_{<t}) \right) \cdot \left( \sum_{t'=1}^{|y|} \sum_{y_{t'}' \in \mathcal{V}} -\pi_{\text{teacher}}(y_{t'}' \mid x, y_{<t'}) \log \pi_\theta(y_{t'}' \mid x, y_{<t'}) \right) \\ &\quad + \sum_{t=1}^{|y|} \sum_{y_t' \in \mathcal{V}} -\pi_{\text{teacher}}(y_t' \mid x, y_{<t}) \nabla_\theta \log \pi_\theta(y_t' \mid x, y_{<t}) \Bigg] \end{aligned}

记

\begin{aligned} \mathrm{KL} &= \sum_{t'=1}^{|y|} \sum_{y_{t'}' \in \mathcal{V}} -\pi_{\text{teacher}}(y_{t'}' \mid x, y_{<t'}) \log \pi_\theta(y_{t'}' \mid x, y_{<t'}) \end{aligned}

理论上上面是KL,但是简化成CE了。则梯度可以化简为:

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) &= \mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \Bigg[ \sum_{t=1}^{|y|} \mathrm{KL} \cdot \nabla_\theta \log \pi_\theta(y_t \mid x, y_{<t}) \\ &\quad + \sum_{t=1}^{|y|} \sum_{y_t' \in \mathcal{V}} -\pi_{\text{teacher}}(y_t' \mid x, y_{<t}) \nabla_\theta \log \pi_\theta(y_t' \mid x, y_{<t}) \Bigg] \end{aligned}

但是第一项通常不优化(采样过程在蒸馏下被认为是stop gradient的),因此:

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) &= \mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \Bigg[ \sum_{t=1}^{|y|} \sum_{y_t' \in \mathcal{V}} -\pi_{\text{teacher}}(y_t' \mid x, y_{<t}) \nabla_\theta \log \pi_\theta(y_t' \mid x, y_{<t}) \Bigg] \end{aligned}

2.5 结论

SFT与Off-Policy Distillation:

在\log \pi_\theta(y_t \mid x, y_{<t}) 的基础上,SFT直接使用one-hot,Off-Policy Disitllation则是使用教师模型输出的分布进行加权

RL与On-Policy Distillation:

RL直接使用Reward对\log \pi_\theta(y_t \mid x, y_{<t}) 进行one-hot加权,只有被采样到的才有reward,而On-Policy Distillation则更加密集。

[注]:本文讨论Forward KL,因此是使用teacher加权,如果是Reverse KL则是student加权,并且后面还会有一项。

3. 将梯度统一到On-Policy下

SFT和Off-Policy Distillation的期望都是从Off-Policy的Data中采样,和On-Policy不太可比,因此我们使用重要性采样进行变形:

\mathbb{E}_{(x, y^*) \sim \mathcal{D}}[\cdot] = \mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ \frac{\mathcal{I}(y = y^*)}{\pi_\theta(y \mid x)} \cdot \right]

其中 \mathcal{I}(y = y^*) 是指示函数。

SFT 梯度可重写为:

\nabla_\theta \mathcal{L}_{\text{SFT}} = -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[\sum_{t=1}^{|y|} \frac{\mathcal{I}(y = y^*)}{\pi_\theta(y \mid x)} \cdot \nabla_\theta \log \pi_\theta(y_t \mid x, y_{<t}) \right]

Off-Policy Distillation梯度可重写为:

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{distill}}^{\text{off-policy}} &= -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \Bigg[ \sum_{t=1}^{|y|} \sum_{y_t' \in \mathcal{V}} \frac{\mathcal{I}(y = y^*)}{\pi_\theta(y \mid x)} \cdot \pi_{\text{teacher}}(y_t' \mid x, y_{<t}) \cdot \nabla_\theta \log \pi_\theta(y_t' \mid x, y_{<t}) \Bigg] \end{aligned}

RL的梯度为:

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{RL}} = -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \left[ \sum_{t=1}^{|y|} r(x, y) \nabla_\theta \log \pi_\theta(y_t \mid x, y_{<t}) \right] \end{aligned}

On-Policy Distillation的梯度为:

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) &= -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \Bigg[ \sum_{t=1}^{|y|} \sum_{y_t' \in \mathcal{V}} \pi_{\text{teacher}}(y_t' \mid x, y_{<t}) \nabla_\theta \log \pi_\theta(y_t' \mid x, y_{<t}) \Bigg] \end{aligned}

所以上面四个目标的不同之处在于,

\nabla_\theta \log \pi_\theta(y_t' \mid x, y_{<t})

前面的内容不同。SFT和RL是稀疏的,Distillation是稠密的。SFT可以视为奖励模型是\mathcal{I}(y = y^*) 的稀疏RL [4]。

4. 补充在Reverse KL下Distillation策略的梯度

Off-Policy Distillation梯度可重写为:

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{distill}}^{\text{off-policy}} &= -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \Bigg[ \sum_{t=1}^{|y|} \sum_{y_t' \in \mathcal{V}} \frac{\mathcal{I}(y = y^*)}{\pi_\theta(y \mid x)} \cdot \log \frac{\pi_\theta(y_t' \mid x, y_{<t})}{\pi_{\text{teacher}}(y_t' \mid x, y_{<t})} \cdot \nabla_\theta \log \pi_\theta(y_t' \mid x, y_{<t}) \Bigg] \end{aligned}

On-Policy Distillation的梯度为:

\begin{aligned} \nabla_\theta \mathcal{L}_{\text{distill}}^{\text{on-policy}}(\theta) &= -\mathbb{E}_{x \sim \mathcal{D}_x,\; y \sim \pi_\theta(\cdot \mid x)} \Bigg[ \sum_{t=1}^{|y|} \sum_{y_t' \in \mathcal{V}} \log \frac{\pi_\theta(y_t' \mid x, y_{<t})}{\pi_{\text{teacher}}(y_t' \mid x, y_{<t})} \nabla_\theta \log \pi_\theta(y_t' \mid x, y_{<t}) \Bigg] \end{aligned}

也是含有 \nabla_\theta \log \pi_\theta(y_t' \mid x, y_{<t}) ,只是前面所有的加权不一样。

Reference

[1] Self-Distilled Reasoner: On-Policy Self-Distillation for Large Language Models(On-policy和SFT)

https://arxiv.org/html/2601.18734v1

[2] Self-Distillation Enables Continual Learning(On-policy和SFT)

https://arxiv.org/html/2601.19897v1

[3] Reinforcement Learning via Self-Distillation(On-policy和RL)

https://arxiv.org/html/2601.20802v1

[4] On the Generalization of SFT: A Reinforcement Learning Perspective with Reward Rectification(SFT和RL)

https://arxiv.org/abs/2508.05629